Key Insights

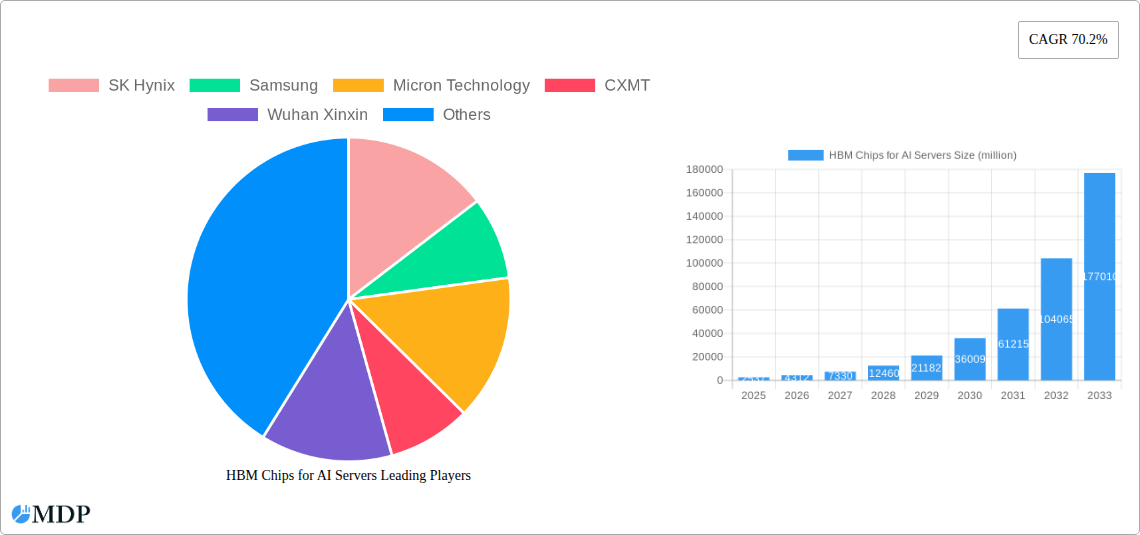

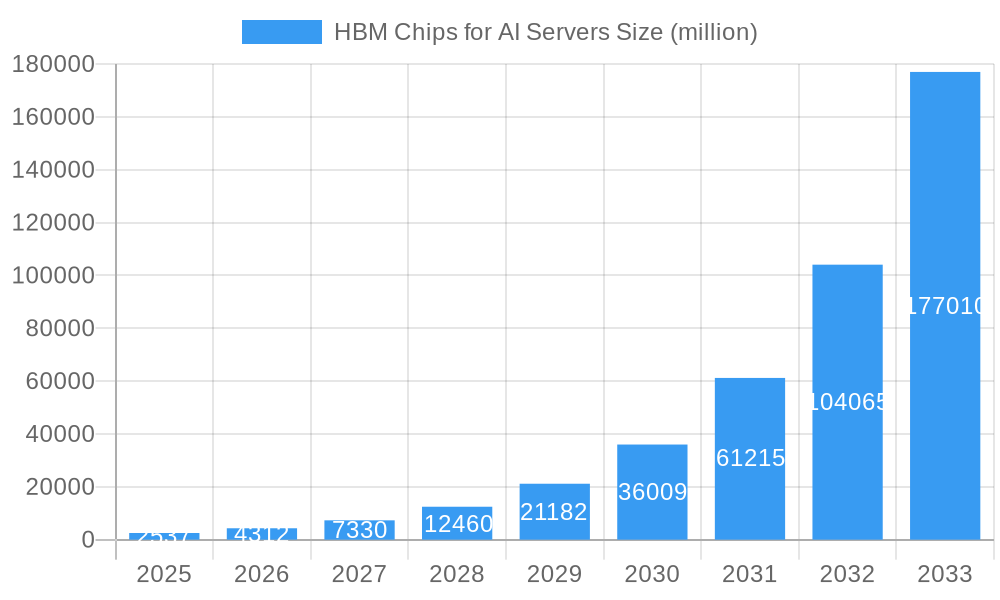

The market for HBM (High Bandwidth Memory) chips specifically designed for AI servers is experiencing explosive growth, projected to reach $2.537 billion in 2025 and exhibiting a remarkable Compound Annual Growth Rate (CAGR) of 70.2%. This surge is primarily driven by the escalating demand for high-performance computing capabilities in AI applications, particularly in deep learning and large language models. The increasing complexity of AI algorithms necessitates significantly faster memory bandwidth, a critical need perfectly addressed by HBM's stacked die architecture. Key industry players like SK Hynix, Samsung, Micron Technology, CXMT, and Wuhan Xinxin are actively investing in R&D and expanding their production capacities to meet this burgeoning demand. The trend towards larger and more sophisticated AI models, coupled with the adoption of advanced server architectures optimized for HBM, will further fuel market expansion. While potential restraints could include supply chain challenges and the high cost of HBM chips, the overall market outlook remains exceptionally positive, with projections indicating continued robust growth through 2033.
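As a rough illustration of what a 70.2% CAGR implies, the sketch below compounds the 2025 figure forward year by year. It assumes the rate applies uniformly from the 2025 base year through 2033, which the text does not state explicitly. `project_value` and `implied_cagr` are hypothetical helper names, and the printed values are plain arithmetic, not figures taken from the report.

```python
def project_value(base_value: float, cagr: float, years: int) -> float:
    """Compound base_value forward by `years` at the annual rate `cagr`."""
    return base_value * (1.0 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Inputs quoted in the text; the uniform-rate assumption is ours.
BASE_YEAR = 2025
BASE_VALUE_MUSD = 2537.0   # USD million in 2025
CAGR = 0.702               # 70.2% per year
END_YEAR = 2033            # end of the stated forecast period

for year in range(BASE_YEAR, END_YEAR + 1):
    value = project_value(BASE_VALUE_MUSD, CAGR, year - BASE_YEAR)
    print(f"{year}: {value:,.0f} USD million")
```

Running the loop makes the effect of compounding visible: at this rate the market multiplies by roughly 1.7 each year, so a modest change in the assumed CAGR or forecast horizon shifts the end value substantially. `implied_cagr` does the reverse calculation and can be used to check a quoted start value, end value, and rate against each other.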

HBM Chips for AI Servers Market Size (In Billion)

The sustained growth of the AI server market is directly tied to the increasing adoption of HBM chips. Traditional DRAM struggles with the massive data processing requirements of modern AI workloads, a limitation that HBM's superior bandwidth overcomes. This technology advantage, combined with ongoing advancements in HBM density and performance, will further consolidate its position as the memory solution of choice for high-end AI servers. The forecast period (2025-2033) anticipates a significant expansion, fueled by the continued growth of AI applications across diverse sectors, from autonomous vehicles and healthcare to finance and scientific research. The competitive landscape, characterized by established players and emerging contenders, will likely see further consolidation and innovation as the market matures.

HBM Chips for AI Servers Company Market Share

Unlocking Explosive Growth: The Comprehensive Report on HBM Chips for AI Servers (2019-2033)

This in-depth report provides a comprehensive analysis of the High Bandwidth Memory (HBM) chips market for AI servers, projecting a multi-billion dollar opportunity. From market dynamics and leading players to future growth potential, this report is an invaluable resource for industry stakeholders, investors, and technology enthusiasts. The study period covers 2019-2033, with 2025 serving as both the base and estimated year. The forecast period spans 2025-2033, while the historical period encompasses 2019-2024. Expect actionable insights and data-driven predictions to inform your strategic decision-making.

HBM Chips for AI Servers Market Dynamics & Concentration

The HBM chips market for AI servers is experiencing rapid expansion, driven by the insatiable demand for high-performance computing in AI applications. Market concentration is currently moderate, with key players like SK Hynix, Samsung, and Micron Technology holding significant market share. However, the emergence of companies like CXMT and Wuhan Xinxin is intensifying competition. The market is characterized by continuous innovation, with companies investing heavily in R&D to develop faster, higher-capacity HBM chips. Regulatory frameworks, while not overly restrictive, play a role in influencing market access and trade. Product substitutes, such as GDDR6 memory, exist but lack the bandwidth crucial for demanding AI workloads. The increasing adoption of AI across various industries fuels end-user demand, while M&A activity remains relatively moderate, with approximately xx major deals in the historical period, indicating a potential for future consolidation. The market share in 2025 is estimated to be distributed as follows: SK Hynix (xx%), Samsung (xx%), Micron Technology (xx%), CXMT (xx%), Wuhan Xinxin (xx%), and Others (xx%).

HBM Chips for AI Servers Industry Trends & Analysis

The HBM chips market for AI servers is poised for substantial growth, projected to reach $xx million by 2033. This remarkable expansion is fueled by several key trends. The proliferation of large language models (LLMs) and generative AI necessitates memory solutions with exceptionally high bandwidth, making HBM chips indispensable. The increasing prevalence of high-performance computing (HPC) applications and the growing adoption of cloud computing further accelerate demand. Technological disruptions, such as advancements in stacking technology and 3D packaging, continue to push performance boundaries and drive market penetration. Consumer preferences are shifting towards greater data processing speeds and lower latency, which HBM chips effectively address. Competitive dynamics are shaped by ongoing innovation, capacity expansion, and strategic partnerships. The Compound Annual Growth Rate (CAGR) is estimated at xx% during the forecast period, with market penetration expected to reach xx% by 2033.

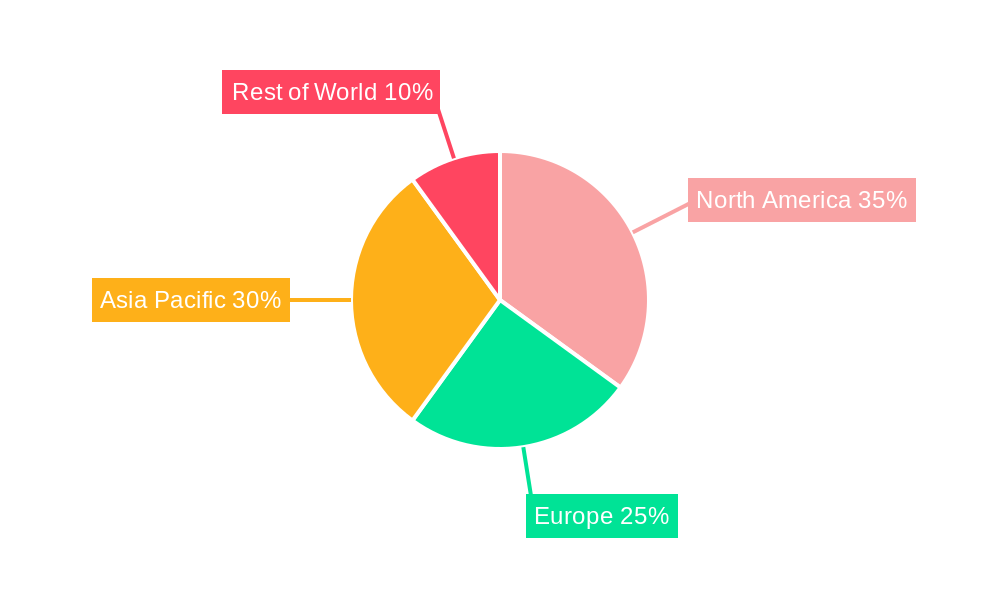

Leading Markets & Segments in HBM Chips for AI Servers

The North American market currently dominates the HBM chips for AI servers landscape, driven by robust technological advancements and significant investments in the AI sector. This dominance is supported by:

- Favorable Regulatory Environment: Supportive government policies and funding initiatives stimulate innovation and deployment.

- Strong Infrastructure: Advanced data centers and robust internet connectivity provide ideal conditions for AI applications.

- High Adoption Rates: Early adoption of cloud-based AI services and HPC solutions drives demand.

While North America leads, significant growth potential exists in the Asia-Pacific region, particularly in China, driven by rapidly expanding domestic AI industries and government support. The data center segment is currently the largest consumer of HBM chips, but significant growth is expected in the edge computing and high-performance computing sectors.

HBM Chips for AI Servers Product Developments

Recent years have witnessed significant advancements in HBM chip technology. Higher density, wider data buses, and faster speeds are constantly being developed to meet the growing demands of AI applications. This includes innovations in packaging technologies that enable greater integration and improved performance. Manufacturers are focusing on optimizing power efficiency and reducing costs while maintaining high performance levels, driving broader adoption. New applications in areas beyond traditional server applications are further enhancing market reach.

Key Drivers of HBM Chips for AI Servers Growth

The growth of the HBM chips market for AI servers is primarily fueled by the surging demand for AI-powered applications across diverse industries. Technological advancements in HBM itself, such as higher density, wider data buses, and faster speeds, have also played a pivotal role. Supportive government policies and substantial investments in AI infrastructure further accelerate market growth.

Challenges in the HBM Chips for AI Servers Market

Despite significant growth potential, several challenges hinder market expansion. Supply chain disruptions can cause production delays and price volatility. The high cost of HBM chips poses a significant barrier to entry for some users. Intense competition from established players and emerging competitors necessitates continuous innovation and cost optimization. These factors impact the market’s overall growth rate. The total impact is estimated to reduce the market size by approximately $xx million by 2033.

Emerging Opportunities in HBM Chips for AI Servers

The HBM chips market for AI servers presents significant long-term growth opportunities. Technological breakthroughs, such as the development of higher-capacity and lower-power HBM chips, will further expand the addressable market. Strategic partnerships between chip manufacturers and AI solution providers will accelerate product adoption. Expansion into new market segments, such as edge computing and autonomous vehicles, offers substantial growth potential.

Leading Players in the HBM Chips for AI Servers Sector

- SK Hynix

- Samsung

- Micron Technology

- CXMT

- Wuhan Xinxin

Key Milestones in HBM Chips for AI Servers Industry

- 2020: Introduction of HBM2E chips with increased capacity and bandwidth.

- 2022: Announcement of next-generation HBM3 specifications with significant performance improvements.

- 2023: Several companies launched their HBM3-based products.

- 2024: Significant increase in production capacity for HBM chips.

Strategic Outlook for HBM Chips for AI Servers Market

The HBM chips market for AI servers holds immense potential for future growth. Continued technological advancements, expanding applications, and strategic collaborations will drive substantial market expansion. Focus on high-capacity, high-bandwidth, and low-power consumption chips will shape the competitive landscape and provide substantial value to consumers. Investors and stakeholders should closely monitor industry developments to capitalize on emerging opportunities.

HBM Chips for AI Servers Segmentation

- 1. Application

- 1.1. CPU+GPU Servers

- 1.2. CPU+FPGA Servers

- 1.3. CPU+ASIC Servers

- 1.4. Others

- 2. Types

- 2.1. HBM2

- 2.2. HBM2E

- 2.3. HBM3

- 2.4. HBM3E

- 2.5. Others

HBM Chips for AI Servers Segmentation By Geography

- 1. North America

- 1.1. United States

- 1.2. Canada

- 1.3. Mexico

- 2. South America

- 2.1. Brazil

- 2.2. Argentina

- 2.3. Rest of South America

- 3. Europe

- 3.1. United Kingdom

- 3.2. Germany

- 3.3. France

- 3.4. Italy

- 3.5. Spain

- 3.6. Russia

- 3.7. Benelux

- 3.8. Nordics

- 3.9. Rest of Europe

- 4. Middle East & Africa

- 4.1. Turkey

- 4.2. Israel

- 4.3. GCC

- 4.4. North Africa

- 4.5. South Africa

- 4.6. Rest of Middle East & Africa

- 5. Asia Pacific

- 5.1. China

- 5.2. India

- 5.3. Japan

- 5.4. South Korea

- 5.5. ASEAN

- 5.6. Oceania

- 5.7. Rest of Asia Pacific

HBM Chips for AI Servers Regional Market Share

Geographic Coverage of HBM Chips for AI Servers

HBM Chips for AI Servers REPORT HIGHLIGHTS

| Aspects | Details |
|---|---|
| Study Period | 2020-2034 |
| Base Year | 2025 |
| Estimated Year | 2026 |
| Forecast Period | 2026-2034 |
| Historical Period | 2020-2025 |
| Growth Rate | CAGR of 70.2% from 2020 to 2034 |
| Segmentation | By Application, By Types, By Geography |

Table of Contents

- 1. Introduction

- 1.1. Research Scope

- 1.2. Market Segmentation

- 1.3. Research Methodology

- 1.4. Definitions and Assumptions

- 2. Executive Summary

- 2.1. Introduction

- 3. Market Dynamics

- 3.1. Introduction

- 3.2. Market Drivers

- 3.3. Market Restraints

- 3.4. Market Trends

- 4. Market Factor Analysis

- 4.1. Porter's Five Forces

- 4.2. Supply/Value Chain

- 4.3. PESTEL analysis

- 4.4. Market Entropy

- 4.5. Patent/Trademark Analysis

- 5. Global HBM Chips for AI Servers Analysis, Insights and Forecast, 2020-2032

- 5.1. Market Analysis, Insights and Forecast - by Application

- 5.1.1. CPU+GPU Servers

- 5.1.2. CPU+FPGA Servers

- 5.1.3. CPU+ASIC Servers

- 5.1.4. Others

- 5.2. Market Analysis, Insights and Forecast - by Types

- 5.2.1. HBM2

- 5.2.2. HBM2E

- 5.2.3. HBM3

- 5.2.4. HBM3E

- 5.2.5. Others

- 5.3. Market Analysis, Insights and Forecast - by Region

- 5.3.1. North America

- 5.3.2. South America

- 5.3.3. Europe

- 5.3.4. Middle East & Africa

- 5.3.5. Asia Pacific


- 6. North America HBM Chips for AI Servers Analysis, Insights and Forecast, 2020-2032

- 6.1. Market Analysis, Insights and Forecast - by Application

- 6.1.1. CPU+GPU Servers

- 6.1.2. CPU+FPGA Servers

- 6.1.3. CPU+ASIC Servers

- 6.1.4. Others

- 6.2. Market Analysis, Insights and Forecast - by Types

- 6.2.1. HBM2

- 6.2.2. HBM2E

- 6.2.3. HBM3

- 6.2.4. HBM3E

- 6.2.5. Others


- 7. South America HBM Chips for AI Servers Analysis, Insights and Forecast, 2020-2032

- 7.1. Market Analysis, Insights and Forecast - by Application

- 7.1.1. CPU+GPU Servers

- 7.1.2. CPU+FPGA Servers

- 7.1.3. CPU+ASIC Servers

- 7.1.4. Others

- 7.2. Market Analysis, Insights and Forecast - by Types

- 7.2.1. HBM2

- 7.2.2. HBM2E

- 7.2.3. HBM3

- 7.2.4. HBM3E

- 7.2.5. Others


- 8. Europe HBM Chips for AI Servers Analysis, Insights and Forecast, 2020-2032

- 8.1. Market Analysis, Insights and Forecast - by Application

- 8.1.1. CPU+GPU Servers

- 8.1.2. CPU+FPGA Servers

- 8.1.3. CPU+ASIC Servers

- 8.1.4. Others

- 8.2. Market Analysis, Insights and Forecast - by Types

- 8.2.1. HBM2

- 8.2.2. HBM2E

- 8.2.3. HBM3

- 8.2.4. HBM3E

- 8.2.5. Others


- 9. Middle East & Africa HBM Chips for AI Servers Analysis, Insights and Forecast, 2020-2032

- 9.1. Market Analysis, Insights and Forecast - by Application

- 9.1.1. CPU+GPU Servers

- 9.1.2. CPU+FPGA Servers

- 9.1.3. CPU+ASIC Servers

- 9.1.4. Others

- 9.2. Market Analysis, Insights and Forecast - by Types

- 9.2.1. HBM2

- 9.2.2. HBM2E

- 9.2.3. HBM3

- 9.2.4. HBM3E

- 9.2.5. Others


- 10. Asia Pacific HBM Chips for AI Servers Analysis, Insights and Forecast, 2020-2032

- 10.1. Market Analysis, Insights and Forecast - by Application

- 10.1.1. CPU+GPU Servers

- 10.1.2. CPU+FPGA Servers

- 10.1.3. CPU+ASIC Servers

- 10.1.4. Others

- 10.2. Market Analysis, Insights and Forecast - by Types

- 10.2.1. HBM2

- 10.2.2. HBM2E

- 10.2.3. HBM3

- 10.2.4. HBM3E

- 10.2.5. Others


- 11. Competitive Analysis

- 11.1. Global Market Share Analysis 2025

- 11.2. Company Profiles

- 11.2.1 SK Hynix

- 11.2.1.1. Overview

- 11.2.1.2. Products

- 11.2.1.3. SWOT Analysis

- 11.2.1.4. Recent Developments

- 11.2.1.5. Financials (Based on Availability)

- 11.2.2 Samsung

- 11.2.2.1. Overview

- 11.2.2.2. Products

- 11.2.2.3. SWOT Analysis

- 11.2.2.4. Recent Developments

- 11.2.2.5. Financials (Based on Availability)

- 11.2.3 Micron Technology

- 11.2.3.1. Overview

- 11.2.3.2. Products

- 11.2.3.3. SWOT Analysis

- 11.2.3.4. Recent Developments

- 11.2.3.5. Financials (Based on Availability)

- 11.2.4 CXMT

- 11.2.4.1. Overview

- 11.2.4.2. Products

- 11.2.4.3. SWOT Analysis

- 11.2.4.4. Recent Developments

- 11.2.4.5. Financials (Based on Availability)

- 11.2.5 Wuhan Xinxin

- 11.2.5.1. Overview

- 11.2.5.2. Products

- 11.2.5.3. SWOT Analysis

- 11.2.5.4. Recent Developments

- 11.2.5.5. Financials (Based on Availability)


List of Figures

- Figure 1: Global HBM Chips for AI Servers Revenue Breakdown (million, %) by Region 2025 & 2033

- Figure 2: North America HBM Chips for AI Servers Revenue (million), by Application 2025 & 2033

- Figure 3: North America HBM Chips for AI Servers Revenue Share (%), by Application 2025 & 2033

- Figure 4: North America HBM Chips for AI Servers Revenue (million), by Types 2025 & 2033

- Figure 5: North America HBM Chips for AI Servers Revenue Share (%), by Types 2025 & 2033

- Figure 6: North America HBM Chips for AI Servers Revenue (million), by Country 2025 & 2033

- Figure 7: North America HBM Chips for AI Servers Revenue Share (%), by Country 2025 & 2033

- Figure 8: South America HBM Chips for AI Servers Revenue (million), by Application 2025 & 2033

- Figure 9: South America HBM Chips for AI Servers Revenue Share (%), by Application 2025 & 2033

- Figure 10: South America HBM Chips for AI Servers Revenue (million), by Types 2025 & 2033

- Figure 11: South America HBM Chips for AI Servers Revenue Share (%), by Types 2025 & 2033

- Figure 12: South America HBM Chips for AI Servers Revenue (million), by Country 2025 & 2033

- Figure 13: South America HBM Chips for AI Servers Revenue Share (%), by Country 2025 & 2033

- Figure 14: Europe HBM Chips for AI Servers Revenue (million), by Application 2025 & 2033

- Figure 15: Europe HBM Chips for AI Servers Revenue Share (%), by Application 2025 & 2033

- Figure 16: Europe HBM Chips for AI Servers Revenue (million), by Types 2025 & 2033

- Figure 17: Europe HBM Chips for AI Servers Revenue Share (%), by Types 2025 & 2033

- Figure 18: Europe HBM Chips for AI Servers Revenue (million), by Country 2025 & 2033

- Figure 19: Europe HBM Chips for AI Servers Revenue Share (%), by Country 2025 & 2033

- Figure 20: Middle East & Africa HBM Chips for AI Servers Revenue (million), by Application 2025 & 2033

- Figure 21: Middle East & Africa HBM Chips for AI Servers Revenue Share (%), by Application 2025 & 2033

- Figure 22: Middle East & Africa HBM Chips for AI Servers Revenue (million), by Types 2025 & 2033

- Figure 23: Middle East & Africa HBM Chips for AI Servers Revenue Share (%), by Types 2025 & 2033

- Figure 24: Middle East & Africa HBM Chips for AI Servers Revenue (million), by Country 2025 & 2033

- Figure 25: Middle East & Africa HBM Chips for AI Servers Revenue Share (%), by Country 2025 & 2033

- Figure 26: Asia Pacific HBM Chips for AI Servers Revenue (million), by Application 2025 & 2033

- Figure 27: Asia Pacific HBM Chips for AI Servers Revenue Share (%), by Application 2025 & 2033

- Figure 28: Asia Pacific HBM Chips for AI Servers Revenue (million), by Types 2025 & 2033

- Figure 29: Asia Pacific HBM Chips for AI Servers Revenue Share (%), by Types 2025 & 2033

- Figure 30: Asia Pacific HBM Chips for AI Servers Revenue (million), by Country 2025 & 2033

- Figure 31: Asia Pacific HBM Chips for AI Servers Revenue Share (%), by Country 2025 & 2033

List of Tables

- Table 1: Global HBM Chips for AI Servers Revenue million Forecast, by Application 2020 & 2033

- Table 2: Global HBM Chips for AI Servers Revenue million Forecast, by Types 2020 & 2033

- Table 3: Global HBM Chips for AI Servers Revenue million Forecast, by Region 2020 & 2033

- Table 4: Global HBM Chips for AI Servers Revenue million Forecast, by Application 2020 & 2033

- Table 5: Global HBM Chips for AI Servers Revenue million Forecast, by Types 2020 & 2033

- Table 6: Global HBM Chips for AI Servers Revenue million Forecast, by Country 2020 & 2033

- Table 7: United States HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 8: Canada HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 9: Mexico HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 10: Global HBM Chips for AI Servers Revenue million Forecast, by Application 2020 & 2033

- Table 11: Global HBM Chips for AI Servers Revenue million Forecast, by Types 2020 & 2033

- Table 12: Global HBM Chips for AI Servers Revenue million Forecast, by Country 2020 & 2033

- Table 13: Brazil HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 14: Argentina HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 15: Rest of South America HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 16: Global HBM Chips for AI Servers Revenue million Forecast, by Application 2020 & 2033

- Table 17: Global HBM Chips for AI Servers Revenue million Forecast, by Types 2020 & 2033

- Table 18: Global HBM Chips for AI Servers Revenue million Forecast, by Country 2020 & 2033

- Table 19: United Kingdom HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 20: Germany HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 21: France HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 22: Italy HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 23: Spain HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 24: Russia HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 25: Benelux HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 26: Nordics HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 27: Rest of Europe HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 28: Global HBM Chips for AI Servers Revenue million Forecast, by Application 2020 & 2033

- Table 29: Global HBM Chips for AI Servers Revenue million Forecast, by Types 2020 & 2033

- Table 30: Global HBM Chips for AI Servers Revenue million Forecast, by Country 2020 & 2033

- Table 31: Turkey HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 32: Israel HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 33: GCC HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 34: North Africa HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 35: South Africa HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 36: Rest of Middle East & Africa HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 37: Global HBM Chips for AI Servers Revenue million Forecast, by Application 2020 & 2033

- Table 38: Global HBM Chips for AI Servers Revenue million Forecast, by Types 2020 & 2033

- Table 39: Global HBM Chips for AI Servers Revenue million Forecast, by Country 2020 & 2033

- Table 40: China HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 41: India HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 42: Japan HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 43: South Korea HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 44: ASEAN HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 45: Oceania HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

- Table 46: Rest of Asia Pacific HBM Chips for AI Servers Revenue (million) Forecast, by Application 2020 & 2033

Frequently Asked Questions

1. What is the projected Compound Annual Growth Rate (CAGR) of the HBM Chips for AI Servers market?

The projected CAGR is approximately 70.2%.

2. Which companies are prominent players in the HBM Chips for AI Servers market?

Key companies in the market include SK Hynix, Samsung, Micron Technology, CXMT, and Wuhan Xinxin.

3. What are the main segments of the HBM Chips for AI Servers market?

The market segments include Application and Types.

4. Can you provide details about the market size?

The market size is estimated to be USD 2537 million as of 2025.

5. What are some drivers contributing to market growth?

N/A

6. What are the notable trends driving market growth?

N/A

7. Are there any restraints impacting market growth?

N/A

8. Can you provide examples of recent developments in the market?

N/A

9. What pricing options are available for accessing the report?

Pricing options include single-user, multi-user, and enterprise licenses priced at USD 4900.00, USD 7350.00, and USD 9800.00 respectively.

10. Is the market size provided in terms of value or volume?

The market size is provided in terms of value, measured in USD million.

11. Are there any specific market keywords associated with the report?

Yes, the market keyword associated with the report is "HBM Chips for AI Servers," which aids in identifying and referencing the specific market segment covered.

12. How do I determine which pricing option suits my needs best?

The pricing options vary based on user requirements and access needs. Individual users may opt for single-user licenses, while businesses requiring broader access may choose multi-user or enterprise licenses for cost-effective access to the report.

13. Are there any additional resources or data provided in the HBM Chips for AI Servers report?

While the report offers comprehensive insights, it's advisable to review the specific contents or supplementary materials provided to ascertain if additional resources or data are available.

14. How can I stay updated on further developments or reports in the HBM Chips for AI Servers market?

To stay informed about further developments, trends, and reports in the HBM Chips for AI Servers market, consider subscribing to industry newsletters, following relevant companies and organizations, or regularly checking reputable industry news sources and publications.

Methodology

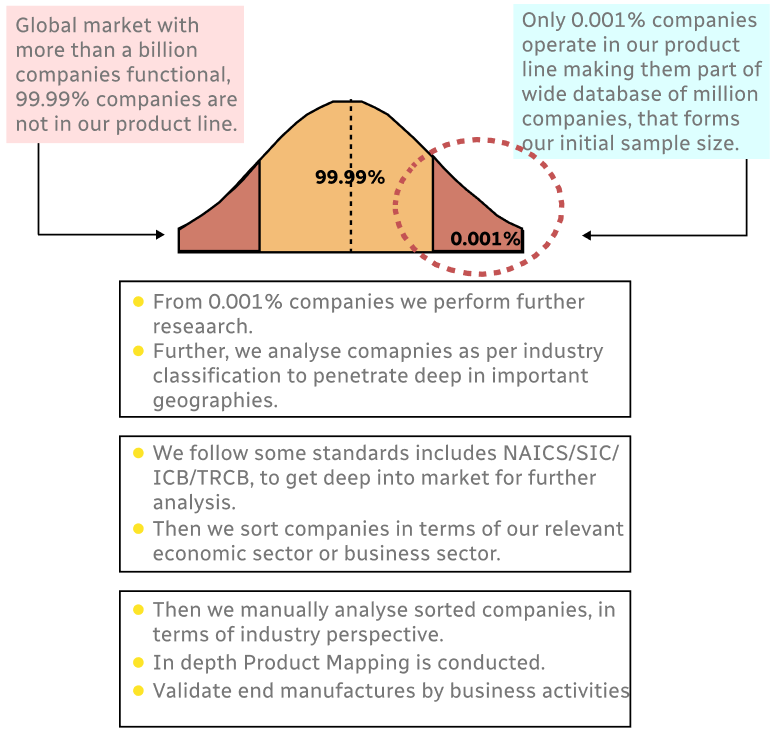

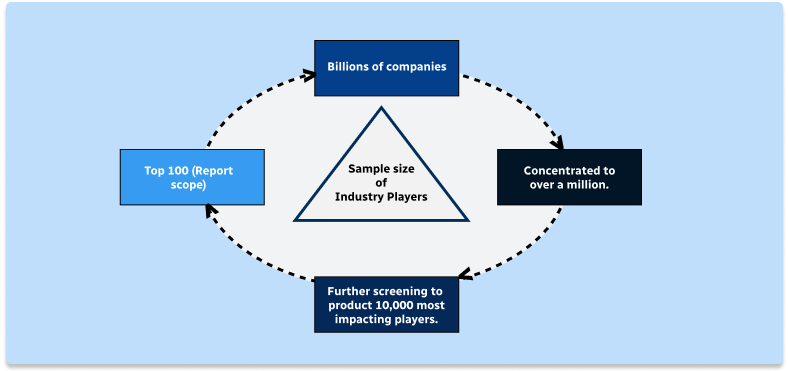

Step 1 - Identification of Relevant Sample Size from Population Database

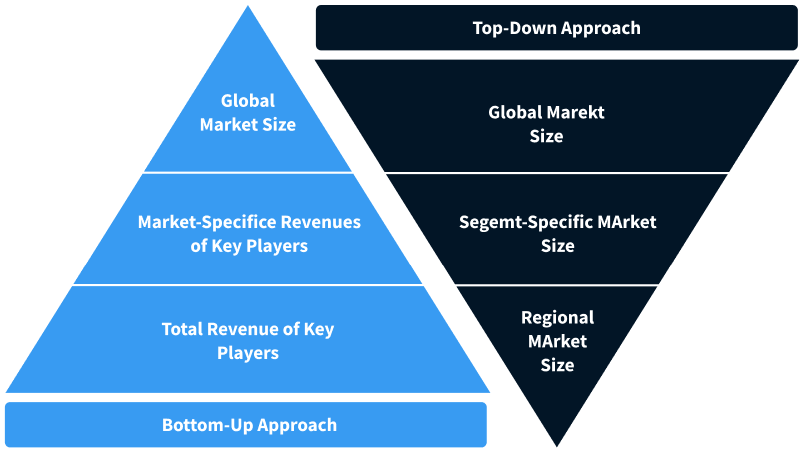

Step 2 - Approaches for Defining Global Market Size (Value, Volume* & Price*)

Note*: In applicable scenarios

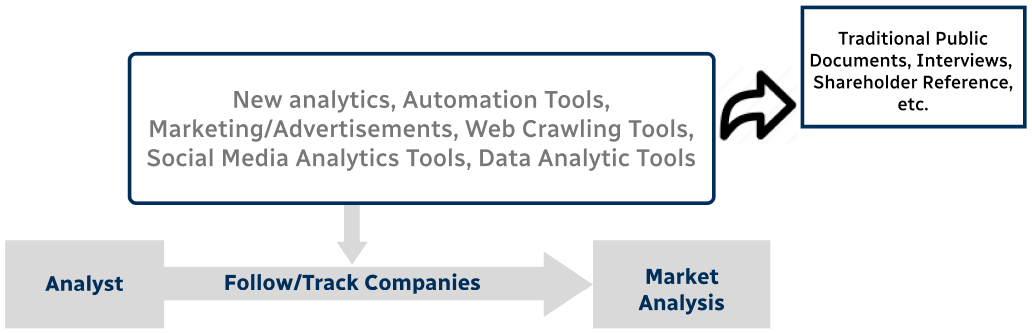

Step 3 - Data Sources

Primary Research

- Web Analytics

- Survey Reports

- Research Institute

- Latest Research Reports

- Opinion Leaders

Secondary Research

- Annual Reports

- White Paper

- Latest Press Release

- Industry Association

- Paid Database

- Investor Presentations

Step 4 - Data Triangulation

Data triangulation uses different sources of information to increase the validity of a study.

These sources are typically stakeholders in a program: participants, other researchers, program staff, other community members, and so on.

All data is then placed in a single framework, and various statistical tools are applied to identify market dynamics.

During the analysis stage, feedback from the stakeholder groups is compared to determine areas of agreement as well as areas of divergence.
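One way to picture the comparison step is the minimal sketch below, which takes a central value across sources and separates those that agree with it from those that diverge. The function name `triangulate`, the use of the median, the 15% tolerance, and the sample inputs are all assumptions made for illustration; the report does not specify which statistical tools it applies.

```python
from __future__ import annotations

from statistics import median


def triangulate(
    estimates: dict[str, float], tolerance: float = 0.15
) -> tuple[float, dict[str, float], dict[str, float]]:
    """Split source estimates into those near the median and those far from it.

    `tolerance` is the allowed relative distance from the median
    (a hypothetical threshold, not taken from the report).
    """
    center = median(estimates.values())
    agree = {
        source: value
        for source, value in estimates.items()
        if abs(value - center) <= tolerance * center
    }
    diverge = {
        source: value
        for source, value in estimates.items()
        if source not in agree
    }
    return center, agree, diverge


# Placeholder inputs only: these numbers are invented for the example.
sample = {"survey": 100.0, "annual_reports": 105.0, "paid_database": 150.0}
center, agree, diverge = triangulate(sample)
print("central estimate:", center)
print("areas of agreement:", sorted(agree))
print("areas of divergence:", sorted(diverge))
```

The median is used here because a single outlying source does not pull it far; a mean or a weighted scheme would be equally valid choices depending on how much each source is trusted.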